Above is a recent update from OpenAI on GPT and its progress toward becoming a more competent AI for the masses. In this short ad, we see how ChatGPT has been remodeled to be “Safer and more aligned,” following the recent outcry over how nefarious AI/ChatGPT can become.

We see news articles proclaiming that AI will replace and destroy human art and ingenuity. In articles such as The Atlantic’s “AI is Ushering in a Textpocalypse,” first world problems and fear mongering are abundant, as many writers fear that AI will rapidly usher in a flood of AI-generated text.

“Whether or not a fully automated textpocalypse comes to pass, the trends are only accelerating. From a piece of genre fiction to your doctor’s report, you may not always be able to presume human authorship behind whatever it is you are reading. Writing, but more specifically digital text—as a category of human expression—will become estranged from us.”

https://www.theatlantic.com/technology/archive/2023/03/ai-chatgpt-writing-language-models/673318/

This fear of AI replacing low-effort posts is truly a first world problem. There is so much precarity around which media and jobs will be lost to ChatGPT/AI.

I had a wonderful chat about ChatGPT with my classmate Tuka. We spoke about how people are potentially focusing on the wrong thing at the moment, and how not many people know how these AI models are made.

Seeing the recent ad made me think of a question:

How has OpenAI made GPT “Safer and more aligned”?

https://time.com/6247678/openai-chatgpt-kenya-workers/

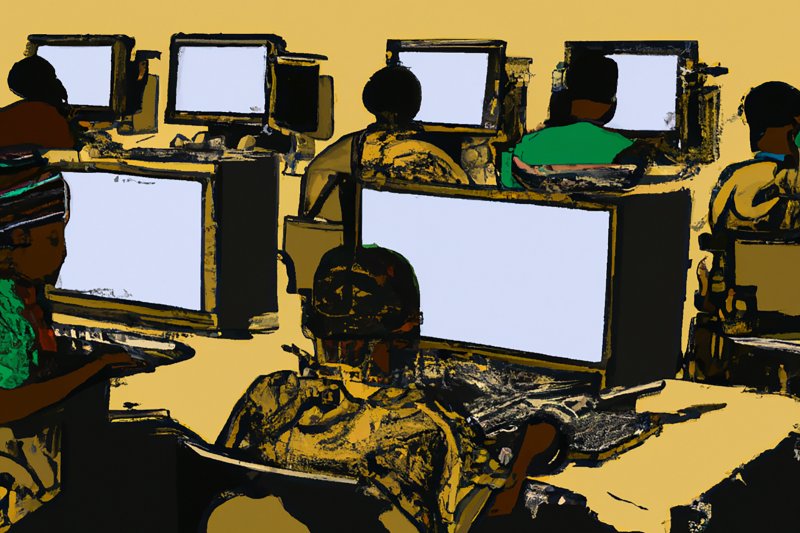

In this Time article, we can uncover how the sausage was made. OpenAI outsourced much of the filtering to human workers.

“To build that safety system, OpenAI took a leaf out of the playbook of social media companies like Facebook, who had already shown it was possible to build AIs that could detect toxic language like hate speech to help remove it from their platforms. The premise was simple: feed an AI with labeled examples of violence, hate speech, and sexual abuse, and that tool could learn to detect those forms of toxicity in the wild. That detector would be built into ChatGPT to check whether it was echoing the toxicity of its training data, and filter it out before it ever reached the user. It could also help scrub toxic text from the training datasets of future AI models.

To get those labels, OpenAI sent tens of thousands of snippets of text to an outsourcing firm in Kenya, beginning in November 2021. Much of that text appeared to have been pulled from the darkest recesses of the internet. Some of it described situations in graphic detail like child sexual abuse, bestiality, murder, suicide, torture, self harm, and incest.”

https://time.com/6247678/openai-chatgpt-kenya-workers/

In my opinion, the outcry online is misplaced. I can’t imagine the trauma many of these individuals are suffering in order to filter out the worst of humanity.

Nelson, excellent investigating and really thoughtful engagement with what’s at stake here. Looking forward to talking more in a bit.